Model architectures and training data

Midjourney and DALL·E both build on large-scale generative techniques, chiefly diffusion and transformers, but each is optimized differently. The original DALL·E was an autoregressive transformer; DALL·E 2 conditions a diffusion decoder on CLIP embeddings, and DALL·E 3 builds on paired image-text data that OpenAI recaptioned with more descriptive captions. Midjourney’s models are proprietary and not publicly documented; they appear to be diffusion-based and tuned toward artistic output, reportedly informed by community feedback and custom prompt parsing. Differences in training corpora (the balance of licensed, public, and synthetic images) affect visual priors, color palettes, and subject rendering. Training data also influences photorealistic fidelity and object consistency; Midjourney’s outputs suggest data choices that emphasize stylization and compositional variety. Architectural choices, noise schedules, and conditioning mechanisms further shape output quality and responsiveness to prompts.

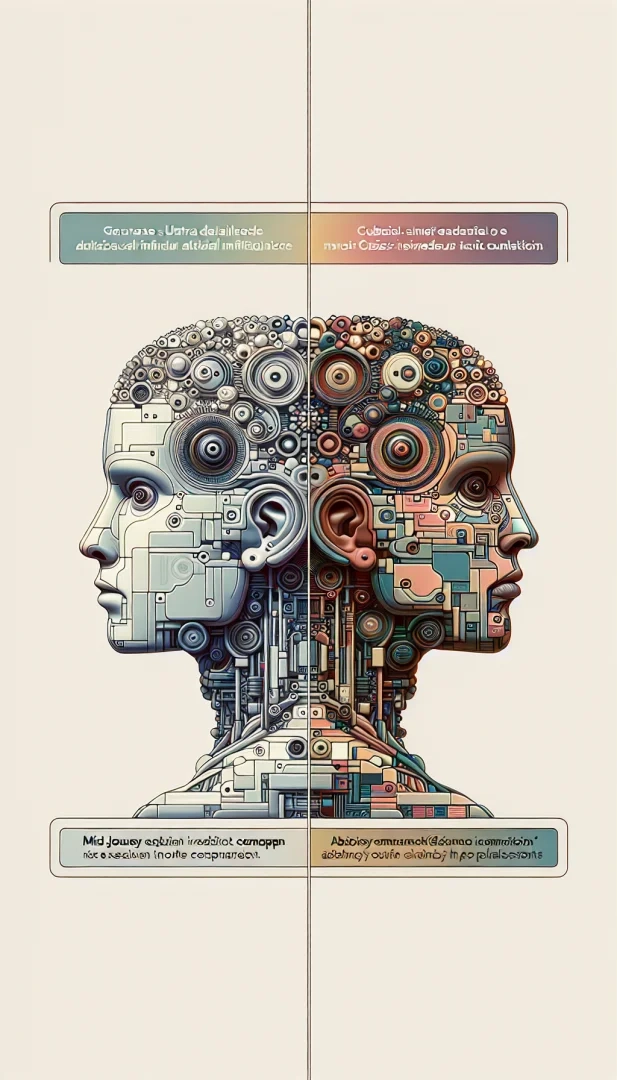

Image quality comparison

Resolution, detail retention, and artifact control vary between platforms. DALL·E tends to produce cleaner, more photorealistic images with accurate lighting, shadows, and perspective when prompted for realism. Midjourney often delivers higher perceived detail through painterly textures, dramatic contrast, and purposeful imperfections that artists find appealing. In close-up renders, DALL·E’s object edges and materials are frequently crisper, while Midjourney tends to add ornate detail and flourishes. The compression, upscaling algorithms, and post-generation enhancement features each service applies also change the final quality users receive.

Stylistic versatility and aesthetic control

Midjourney excels at artistic styles: fantasy, concept art, illustration, and stylized photography. It responds strongly to creative adjectives and art-historical references, producing visually cohesive, mood-driven outputs. DALL·E offers broad stylistic coverage too, but leans toward faithful reproduction of photographic and realistic aesthetics when precision is requested. Both systems interpret style tokens differently; Midjourney’s model often magnifies stylistic cues while DALL·E moderates them for plausibility. Users seeking brand-consistent, repeatable aesthetics may prefer Midjourney for expressive campaigns and DALL·E for product visualizations requiring uniformity.

Photorealism and realism metrics

Photorealism hinges on accurate anatomy, natural lighting, realistic materials, and lens-based artifacts like depth of field and bokeh. DALL·E is often judged more realistic on criteria such as object fidelity and background coherence. Its outputs often exhibit consistent human features, believable skin tones, and correct reflections. Midjourney can reach photorealistic levels but sometimes sacrifices strict accuracy for mood, producing slightly exaggerated facial expressions or stylized compositions. Quantitative evaluations using Fréchet Inception Distance (FID), Learned Perceptual Image Patch Similarity (LPIPS), or human perceptual tests show that each platform’s performance depends heavily on prompt specificity and subject complexity.
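For reference, FID is usually defined as the Fréchet distance between two Gaussians fitted to Inception-network features of a reference image set and a generated image set. The symbols below are notation chosen for this sketch: \mu_r and \Sigma_r are the feature mean and covariance of the reference set, and \mu_g and \Sigma_g are those of the generated set.

```latex
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2
  + \operatorname{Tr}\!\left( \Sigma_r + \Sigma_g - 2\,(\Sigma_r \Sigma_g)^{1/2} \right)
```

Lower values mean the generated set’s feature statistics sit closer to the reference set. Because the score depends on the reference images and the sample size, scores are only comparable when both platforms are measured against the same reference set with the same number of samples.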

Prompting strategies and control parameters

Effective prompting differs across systems. Midjourney users combine short, evocative prompts with model-specific parameters such as aspect ratio, stylize, and quality to nudge artistic interpretation. DALL·E users tend to craft descriptive, precise prompts that emphasize photographic terms (camera type, lens focal length, film stock, lighting direction) to achieve realism. Iterative refinement and reference images help both models avoid unwanted artifacts. Midjourney additionally accepts negative terms through its --no parameter, whereas DALL·E has no dedicated negative-prompt field, so exclusions have to be phrased in the prompt itself. ControlNet-style conditioning and inpainting features available in some interfaces give additional control over pose, composition, and occlusions, improving reproducibility for professional workflows.
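The two prompting styles can be sketched as small string builders. This is a minimal illustration, not an official client: the function names and arguments are hypothetical, and the only platform-specific pieces are Midjourney’s documented `--ar`, `--stylize`, `--quality`, and `--no` parameters. Valid value ranges differ between model versions, so check the current documentation before relying on specific numbers.

```python
from typing import Optional, Sequence


def build_midjourney_prompt(
    subject: str,
    aspect_ratio: Optional[str] = None,
    stylize: Optional[int] = None,
    quality: Optional[float] = None,
    exclude: Sequence[str] = (),
) -> str:
    """Append Midjourney-style parameters to a short, evocative prompt.

    Hypothetical helper: it only formats text and does not call any service.
    """
    parts = [subject.strip()]
    if aspect_ratio is not None:
        parts.append(f"--ar {aspect_ratio}")
    if stylize is not None:
        parts.append(f"--stylize {stylize}")
    if quality is not None:
        parts.append(f"--quality {quality}")
    if exclude:
        # --no takes a comma-separated list of things to keep out of the image.
        parts.append("--no " + ", ".join(exclude))
    return " ".join(parts)


def build_photo_prompt(subject: str, camera: str, lens: str, lighting: str) -> str:
    """Compose a descriptive, photography-oriented prompt as one sentence."""
    return f"{subject.strip()}, shot on {camera} with a {lens} lens, {lighting}"


# Example usage with made-up subjects and settings.
print(build_midjourney_prompt(
    "misty castle at dawn",
    aspect_ratio="16:9",
    stylize=250,
    exclude=["text", "watermark"],
))
print(build_photo_prompt(
    "ceramic mug on an oak table",
    camera="a full-frame DSLR",
    lens="50mm",
    lighting="soft window light from the left",
))
```

Keeping prompt construction in code like this makes it easier to vary one parameter at a time and see which change produced which visual difference.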

Handling people, faces, and text

Face rendering and text synthesis remain challenging. DALL·E shows strength in rendering coherent facial features and consistent identity across variations, although it also enforces safety filters that limit generation of public figures. Midjourney produces compelling portraits with expressive lighting and posing but may introduce morphological inconsistencies when generating multiple images of the same person. Text generated inside images has historically been unreliable on both platforms. Newer model versions handle short words and phrases better, but longer lettering often still appears distorted, so it is usually corrected afterward in a raster or vector editor.

Speed, cost, and iteration workflow

Generation speed and pricing influence practical adoption. Midjourney uses a subscription model with several tiers and separate fast and relaxed generation modes; iteration is often rapid within its chat-based interface. DALL·E is available through the OpenAI API or OpenAI’s own apps, with per-image cost depending on resolution and usage. For high-volume prototyping, DALL·E’s API flexibility and integration options suit automated pipelines, while Midjourney’s community-driven Discord workflow encourages creative exploration and social iteration. Batch generation, upscaling, and variant creation tools determine how quickly designers can converge on a final asset.
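A quick way to compare a flat subscription with per-image pricing is to compute the break-even volume. The sketch below uses made-up prices in integer cents; they are placeholders for illustration, not either vendor’s actual rates, and the function names are hypothetical.

```python
def pay_per_image_cost_cents(n_images: int, price_cents: int) -> int:
    """Total cost in cents when every image is billed individually."""
    return n_images * price_cents


def break_even_images(monthly_fee_cents: int, price_cents: int) -> int:
    """Smallest image count at which pay-per-image costs at least the flat fee."""
    if price_cents <= 0:
        raise ValueError("price_cents must be positive")
    # Ceiling division on integers avoids floating-point rounding surprises.
    return -(-monthly_fee_cents // price_cents)


# Hypothetical prices, chosen only to make the arithmetic easy to follow.
MONTHLY_FEE_CENTS = 3000    # flat subscription fee per month
PRICE_PER_IMAGE_CENTS = 4   # per-image API price

threshold = break_even_images(MONTHLY_FEE_CENTS, PRICE_PER_IMAGE_CENTS)
print(f"Break-even at {threshold} images per month")
```

Below the break-even volume, per-image billing is cheaper; above it, the flat fee wins, provided the subscription tier actually allows that many generations.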

Use cases and recommended choices

Choose DALL·E for product mockups, realistic advertising, architectural visualizations, and scenarios where accurate materials and perspective matter. Choose Midjourney for concept art, mood boards, character design, and marketing assets that benefit from a distinctive artistic voice. For hybrid projects, combine both: generate photorealistic base assets with DALL·E, then use Midjourney for a stylized reinterpretation. Alternatively, reverse the order: create artistically rich concepts in Midjourney, then refine composition and realism in a raster editor or with DALL·E inpainting.

Ethical considerations and bias

Both platforms reflect biases present in their training data. Skewed skin tone representation, cultural stereotypes, and gendered stereotypes can emerge unless prompts explicitly request diverse or neutral portrayals. Copyright and style mimicry are ongoing legal and ethical concerns; creators should verify usage rights and avoid directly imitating living artists. Transparency about generated content and adherence to platform terms and local regulations are essential for responsible deployment.

Hands-on tips for better results

– Start with a clear goal: decide between realism and stylization before prompting.
– Use camera and lighting terms for photorealism; use art movement and material descriptors for stylized outputs.
– Include negative terms to suppress undesired elements.
– Iterate: generate multiple seeds and select the best candidates for upscaling or inpainting.
– Combine tools: use Midjourney for imagination and DALL·E for precision, then polish in an image editor.

Technical interoperability and future trends

Interoperability through standard prompt formats, shared conditioning tools, and cross-platform workflows will grow. Expect improvements in multimodal consistency, person-specific models, better text rendering, and reduced hallucination. Model ensembles that merge Midjourney’s artistic strengths with DALL·E’s photorealism could provide best-of-both-worlds solutions for creatives and enterprises.

Practical checklist for evaluation

– Define fidelity requirements: list necessary resolution, realism, and consistency constraints.
– Test with multiple prompts: run 5 to 10 seed variations and compare outputs side by side.
– Assess editability: prefer images that cleanly accept inpainting, masking, or vectorization.
– Evaluate licensing and export formats: confirm commercial use allowances and metadata retention.
– Monitor model updates: performance and safety features evolve, so re-evaluate periodically to maintain quality and compliance.
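One way to make side-by-side comparison less subjective is a simple weighted rubric. The sketch below is illustrative only: the criteria names, the weights, and the 1 to 5 reviewer scores are hypothetical and should be replaced with the requirements defined in the first checklist step.

```python
from typing import Dict, List, Tuple

# Hypothetical weights: a higher weight means the criterion matters more.
WEIGHTS: Dict[str, int] = {"fidelity": 3, "consistency": 2, "editability": 1}


def weighted_score(scores: Dict[str, int], weights: Dict[str, int]) -> int:
    """Sum of weight * score over every criterion listed in weights."""
    return sum(weights[name] * scores[name] for name in weights)


def rank_candidates(
    candidates: Dict[str, Dict[str, int]],
    weights: Dict[str, int],
) -> List[Tuple[str, int]]:
    """Return (candidate, score) pairs sorted from best to worst."""
    scored = [(name, weighted_score(s, weights)) for name, s in candidates.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Made-up reviewer scores (1 = poor, 5 = excellent) for two generated images.
candidates = {
    "tool_a_seed_1": {"fidelity": 5, "consistency": 4, "editability": 3},
    "tool_b_seed_1": {"fidelity": 4, "consistency": 4, "editability": 5},
}

for name, score in rank_candidates(candidates, WEIGHTS):
    print(name, score)
```

Scoring several seeds per prompt and averaging across reviewers gives a steadier picture than judging a single image from each tool.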

Rapid prototyping recommendation

For tight deadlines, generate low-resolution batches, pick the top candidates, then upscale and refine only those. This saves time without sacrificing creative exploration.

Final advice

Keep records of prompts, settings, and licenses to reproduce results.
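Record keeping can be as light as one JSON line per generation. The sketch below assumes a hypothetical `GenerationRecord` structure; the field names and example values are illustrative choices, not a format either platform exports.

```python
import io
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List, TextIO


@dataclass
class GenerationRecord:
    """One generated image: which tool, which prompt, which settings, which license."""
    tool: str
    prompt: str
    settings: Dict[str, str] = field(default_factory=dict)
    license_note: str = ""


def append_record(stream: TextIO, record: GenerationRecord) -> None:
    """Write the record as a single JSON line."""
    stream.write(json.dumps(asdict(record), sort_keys=True) + "\n")


def load_records(stream: TextIO) -> List[GenerationRecord]:
    """Read back every non-empty JSON line as a GenerationRecord."""
    return [GenerationRecord(**json.loads(line)) for line in stream if line.strip()]


# Example usage with an in-memory buffer; a real log would use open(path, "a").
log = io.StringIO()
append_record(log, GenerationRecord(
    tool="midjourney",
    prompt="misty castle at dawn",
    settings={"ar": "16:9", "seed": "42"},
    license_note="check plan terms before commercial use",
))
log.seek(0)
print(load_records(log))
```

An append-only log like this is easy to search later and can be committed alongside the final assets.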